Have You Been Robbed on the Last Mile of Sales?

It is a fair question, whether you are the seller or the customer. OK, so what is the last mile of sales? I didn't find an official definition, so I'm borrowing the concept from "the last mile of finance" between the balance sheet and the 10-K, and from "the last mile of telecommunications," the copper wire from the common carrier's substation to your home or business. Let's call the last mile of sales that part of the sales funnel in which prospects are ready to become customers, or in which existing customers are ready for up-selling and cross-selling.

Survey Participants were robbed on the Last Mile of Sales!

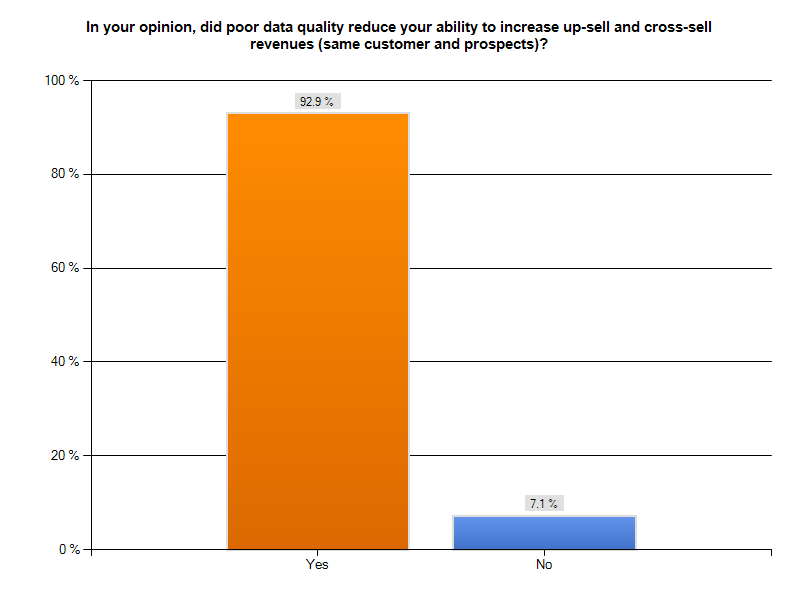

This Tuesday morning, I looked at our "Poor Data Quality - Negative Business Outcomes" survey results and noticed a surprising agreement among participants in one sales-related area. 126 respondents, over 90% of those answering our question about poor data quality compromising up-selling and cross-selling, indicated they had such a problem. The graph gives you a sense of how large a share of respondents lost sales opportunities. This is a troubling statistic. Organizations spend huge sums on marketing programs designed to attract prospects and nurture them into customers. Beyond the direct monetary investment, ensuring a successful trip down the sales funnel takes time, effort, and skill.

From the perspective of the seller, failing to sell more products and services to an existing (presumably happy) client is like being robbed on the last mile of sales. Your organization has already succeeded in making a first sale. Subsequent selling should be easier, not harder.

From the perspective of the buyer, losing confidence in your chosen vendor because it fails to know you and your preferences, confuses you with similarly named customers, or displays inept record-keeping about its last contact with you robs you of a relationship you invested time and money in developing. Perhaps your go-to vendor now becomes your former vendor, and you must spend time seeking an alternate source. Once confidence has been shaken, it is difficult to rebuild.

What did the survey say?

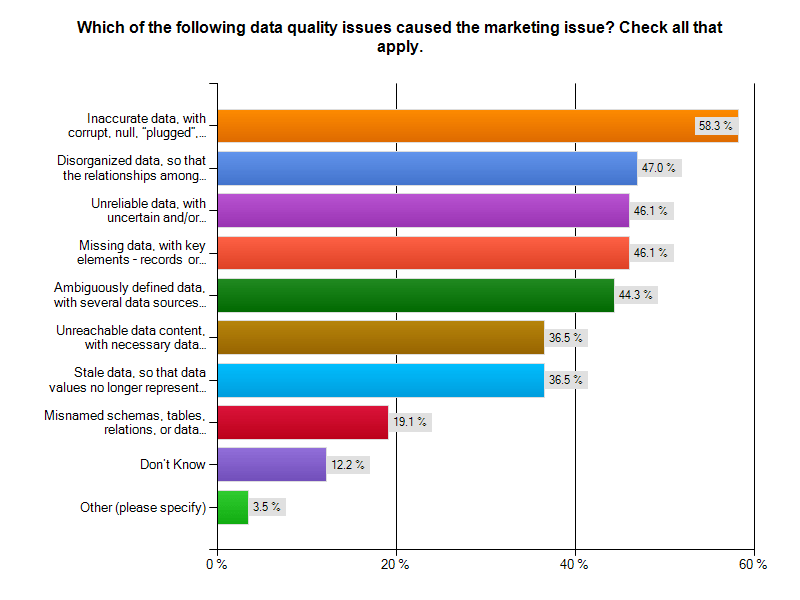

How is it possible that more than 90% of our respondents to this question lost an opportunity to up-sell or cross-sell? The chart tells the story: it is poor data quality, plain and simple. You can read the results yourself. As a sales prospect for a lead generation service, I recently experienced at least one of the top four poor data quality problems first-hand.

Oops, the status wasn't updated after our last call

In the closing months of 2013, I was solicited by a lead generation firm. I asked them to contact me in the first quarter of 2014. Ten days into 2014, they called again. OK, perhaps a bit early in the quarter, but they are eager for my business. With no immediate need, I asked them to call me again in Q3 2014 to see how things were evolving. So I was surprised when I received another call from that firm yesterday. Had we traveled through a time warp? Was it now mid-summer? A look out the window at the snowstorm in progress suggested it was still February 2014. The caller was the same person as last time, and began an identical spiel. I interrupted and mentioned we had only spoken a week earlier. The caller appeared to remember and agree, indicating that there was no status update about the previous call. Was this sloppy ball-handling by sales, an IT issue, or an ill-timed database restore? Was this a one-in-a-million chance or an everyday occurrence? The answer to all of those questions is "I have no idea, but I don't want to trust these folks with managing my lead generation campaign." If they can't handle their own sales process, how are they going to help me with mine? Whatever the cause of the gaffe, they robbed themselves of a prospect, and me of any confidence I might have had in them.

The Bottom Line

Being robbed on the last mile of sales by poor data quality is unnecessary, but all too common. Have you recently been robbed on the last mile of sales? Are you a seller, or a disappointed prospect or customer? Cal Braunstein of The Robert Frances Group and I would like to hear from you. Please contact me to set up an appointment for a conversation. Whether you have already participated in our survey, are a member of the InfoGov community, or simply have an enlightening experience about how poor data quality caused a negative business outcome for you, reach out and let us know.

Published by permission of Stuart Selip, Principal Consulting LLC

Bad Data is a Social Disease (Part 1)

Organizational bad data is a social disease easily passed to your business partners and stakeholders.

With 200 completed responses in our "Poor Data Quality - Negative Business Outcomes" survey, run in conjunction with The Robert Frances Group, the IBM InfoGovernance Community, and Chaordix, it is safe to say that bad data is a social disease that can spread easily and quickly. Merriam-Webster defines a social disease as

a disease (as tuberculosis) whose incidence is directly related to social and economic factors

OK, that definition works for the bad data social disease. In this case, the social and economic factors enabling and potentiating this disease include:

- Business management failing to fund and support data governance initiatives

- IT management failing to sell the value of data quality to their business colleagues

- Business partners failing to challenge and push back when bad data is exchanged

- Financial analysts not downgrading firms that repeatedly refile 10-Ks due to bad data

- Customers not abandoning firms that err due to bad data quality and management

Social diseases negatively affect the sufferer, their partners, and the community around them. According to our respondents:

- 95% of those suffering supply chain issues noted reduced or lost savings that might have been attained from better supply chain integration.

- 72% reported customer data problems, and 71% of those respondents lost business because they didn't know their customer

- 71% of those suffering financial reporting problems said poor data quality caused them to reach and act upon erroneous conclusions based upon materially faulty revenues, expenses, and/or liabilities

- 66% missed the chance to accelerate receivables collection

- 49% reported operations problems from bad data, and 87% of those respondents suffered excess costs for business operations

- 27% reported strategic planning problems, with 75% of those indicating challenges with financial records, profits and losses of units, taxes paid, capital, true customer profiles, overhead allocations, marginal costs, shareholders, etc.
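Several of these figures are conditional: the second percentage applies only to respondents who reported the first problem. The minimal Python sketch below converts them into shares of everyone who answered the relevant question. The helper name `joint_share` is hypothetical, and the sketch assumes each "of those" percentage was measured strictly within the subgroup named before it.

```python
def joint_share(subgroup_share: float, conditional_share: float) -> float:
    """Share of all respondents who are in the subgroup AND report the follow-on impact.

    Assumes conditional_share was measured only within the subgroup.
    """
    for share in (subgroup_share, conditional_share):
        if not 0.0 <= share <= 1.0:
            raise ValueError("shares must be fractions between 0 and 1")
    return subgroup_share * conditional_share


# (subgroup share, share "of those" respondents), taken from the bullets above
conditional_findings = {
    "customer data problems -> lost business": (0.72, 0.71),
    "operations problems -> excess operating costs": (0.49, 0.87),
    "strategic planning problems -> financial-record challenges": (0.27, 0.75),
}

for label, (subgroup, conditional) in conditional_findings.items():
    print(f"{label}: {joint_share(subgroup, conditional):.1%} of all respondents")
```

Read this way, and under that assumption, roughly half of all respondents to the customer data question (0.72 × 0.71 ≈ 51%) lost business because they did not know their customer.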

What is in Part 2?

In next week's post, we'll examine some of our survey results specific to bad data and the supply chain. A successful supply chain requires sound internal data integration and equally sound data exchange and integration across chain participants. A network of willing participants exchanging data is fertile ground for spreading the social disease of business. Expect a thought experiment about wringing the costs of bad data quality out of supply chain management, and see what some supply chain experts think about how much effective supply chains depend on high-quality data.

The Bottom Line

Believe that bad data is a social disease and take a stand on wiping it out. The simplest first step is to make your experiences known to us by visiting the IBM InfoGovernance site and taking our "Poor Data Quality - Negative Business Outcomes" survey. When you get to the question about participating in an interview, answer "YES" and give us real examples of problems, solutions attempted, successes attained, and failures sustained. Only by publicizing the magnitude and pervasiveness of this social disease will we collectively stand a chance of achieving cure and prevention. As a follow-up step, work with us to survey your organization in a private study that parallels our public InfoGovernance study. The public study forms an excellent baseline against which we can compare the specific data quality issues within your organization. You will not attain and sustain data quality until your management understands the depth and breadth of the problem and its cost to your organization's bottom line. Bad data is a needless and costly social disease of business. Let's move forward swiftly and decisively to wipe it out!

Published by permission of Stuart Selip, Principal Consulting LLC

Tectonic Shifts

Lead Analyst: Cal Braunstein

Bellwether Cisco Systems Inc.'s quarterly results beat expectations while CEO John Chambers opined global business was looking cautiously optimistic. In other system news, IBM Corp. made a series of hardware announcements, including new entry level Power Systems servers that offer better total cost of acquisition (TCA) and total cost of ownership (TCO) than comparable competitive Intel Corp. x86-based servers. Meanwhile, the new 2013 Dice Holdings Inc. Tech Salary Survey finds technology professionals enjoyed the biggest pay raise in a decade last year.

Focal Points:

- Cisco reported its fiscal second quarter revenues rose five percent to $12.1 billion versus the previous year's quarter. Net income on a GAAP basis increased 6.2 percent to $2.7 billion. The company's data center business grew 65 percent compared with the previous year, while its wireless business and service provider video offerings gained 27 and 20 percent, respectively. However, Cisco's core router and switching business did not fare as well, with the router business shrinking six percent and switching revenues climbing only three percent. EMEA revenues shrank six percent year-over-year, while the Americas and Asia Pacific climbed two and three percent, respectively. CEO Chambers warned the overall picture was mixed, with parts of Europe remaining very challenging. However, he stated there are early signs of stabilization in government spending and in probably a little over two-thirds of Europe. While there is cautious optimism, there is little tangible evidence that Cisco has turned the corner.

- IBM's Systems and Technology Group launched a number of systems and solutions across its product lines, including new PureSystems solutions, on February 5. Part of the announcement was a set of more affordable, more powerful Power Systems servers designed to aggressively take on Dell Inc., Hewlett-Packard Co. (HP), and Oracle Corp. The upgraded servers are based on POWER7+ microprocessors and have a starting price as low as $5,947 for the Power Express 710. IBM stated the 710 and 730 are competitively priced against HP's Integrity servers and Oracle's Sparc servers, while the PowerLinux 7R1 and 7R2 servers are very aggressively priced to garner market share from x86 servers.

- Dice, a job search site for engineering and technology professionals, recently released its 2013 Tech Salary Survey. Among its key findings: technology salaries saw the biggest year-over-year jump in over a decade, with the average salary increasing 5.3 percent. Additionally, 64 percent of the 15,049 respondents surveyed in late 2012 are confident they can find favorable new positions, if desired. Scot Melland, CEO of Dice Holdings, stated that companies will now have to either pay to recruit or pay to retain, and that today companies are doing both for IT professionals. The top reasons for changing jobs were greater compensation (67 percent), better working conditions (47 percent) and more responsibility (36 percent). David Foote, chief analyst at Foote Partners LLC, finds IT jobs have been on a "strong and sustained growth run" since February 2012. By Foote Partners' calculations, January IT employment showed its largest monthly increase in five years. Foote believes the momentum is so powerful that it is likely to continue barring a severe and deep falloff in the general economy or a catastrophic event. Based on Bureau of Labor Statistics (BLS) data, Foote estimates a gain of 22,100 jobs in January across four IT-related job sectors, whereas the average monthly employment gain from October to December 2012 was 9,700.

RFG POV: While the global economic outlook appears a little brighter than last year, indications are it may not last. Executives will have to carefully manage spending; with the need to increase salaries to retain talent this year, extra caution must be exercised in other spending areas. IT executives should consider leasing IT equipment, software and services for all new acquisitions. This will help to preserve capital while allowing IT to move forward aggressively on innovation, enhancement and transformation projects. RFG studies find 36- to 40-month hardware and software leases are optimal and can be less expensive than purchasing or financing, even over a five-year period. Moreover, IBM's new entry level Power Systems servers are another game-changer. An RFG study found that the three-year TCA for similarly configured x86 systems handling the same workload as the POWER7+ systems can be up to 75 percent more expensive, while the TCO of the x86 servers can be up to 65 percent more expensive. Furthermore, the cost advantage of the Power Systems could be even greater if one included the cost of development systems, application software and downtime impacts. IT executives should reevaluate their standards for platform selection based upon cost, performance, service levels and workload, and not automatically assume that x86 servers are the IT processing answer to all business needs.

Mainframe Survey – Future is Bright

Lead Analyst: Cal Braunstein

According to the 2012 BMC Software Inc. survey of mainframe users, the mainframe continues to be their platform of choice due to its superior availability, security, centralized data serving and performance capabilities. It will remain a critical business tool, and its use will grow, driven by the velocity, volume, and variety of applications and data.

Focal Points:

- Ninety percent of the 1,243 survey respondents consider the mainframe to be a long-term solution, and 50 percent of all respondents agreed it will attract new workloads. Asia-Pacific users reported the strongest outlook, as 57 percent expect to rely on the mainframe for new workloads. The top three IT priorities for respondents were keeping IT costs down, disaster recovery, and application modernization. The top priority, keeping costs down, was identified by 69 percent of those surveyed, up from 60 percent in 2011. Disaster recovery was unchanged at 34 percent, while application modernization was selected by 30 percent, also virtually unchanged. Although availability is considered a top benefit of the mainframe, 39 percent of respondents reported an unplanned outage; however, only 10 percent of organizations stated they experienced any impact from an outage. The primary causes of outages were hardware failure (31 percent), system software failure (30 percent), in-house application failure (28 percent), and change process failure (22 percent).

- 59 percent of respondents expect MIPS capacity to grow as they modernize and add applications to address business needs. The top four factors for continued investment in the mainframe were platform availability advantage (74 percent), security strengths (70 percent), superior centralized data serving (68 percent), and transaction throughput requirements best suited to a mainframe (65 percent). Only 29 percent felt that the costs of migration were too high or that alternative solutions did not have a reasonable return on investment (ROI), up from 26 percent in the previous two years.

- Concern about the shortage of skilled mainframe staff continues. Only about a third of respondents were very concerned about the skills issue, although at least 75 percent of those surveyed expressed some level of concern. The top methods being used to address the skills shortage are internal training (53 percent), hiring experienced staff (40 percent), outsourcing (37 percent), and automation (29 percent). Additionally, more than half of the respondents stated the mainframe must be incorporated into enterprise management processes. Enterprises are recognizing the growing complexity of the hybrid data center and the need for simple, cross-platform solutions.

RFG POV: Some things never change – mainframes are still predominant in certain sectors and will continue to be so over the visible horizon, and yet the staffing challenges linger. Twenty years after mainframes were declared dinosaurs, they remain valuable and growing platforms. In fact, mainframes can be the best choice for certain applications and data serving, as they effectively and efficiently deal with the variety, velocity, veracity, volume, and vulnerability of applications and data while reducing complexity and cost. RFG's latest study on System z as the lowest cost database server (http://lnkd.in/ajiUrY) shows the use of the mainframe can cut the costs of IT operations by around 50 percent. However, with Baby Boomers becoming eligible for retirement, there is greater concern and need for IT executives to utilize more automated, self-learning software and implement better recruitment, training and outsourcing programs. IT executives should evaluate mainframes as the target server platform for clouds, secure data serving, and other environments where zEnterprise's heterogeneous server ecosystem can be used to share data from a single source and optimize capacity and performance at low cost.

Progress – Slow Going

Lead Analyst: Cal Braunstein

According to Uptime Institute's recently released 2012 Data Center Industry Survey, enterprises are lukewarm about sustainability whereas a report released by MeriTalk finds federal executives see IT as a cost and not as part of the solution. In other news, the latest IQNavigator Inc. temporary worker index shows temporary labor rates are slowly rising in the U.S.

Focal Points:

- According to Uptime Institute's recently released 2012 Data Center Industry Survey, more than half of the enterprise respondents stated energy savings were important, but few have financial incentives in place to drive change. Only 20 percent of the organizations' IT departments pay the data center power bill; corporate real estate or facilities is the primary payee. In Asia it is worse: only 10 percent of IT departments pay for power. Slightly less than 50 percent were interested in pursuing a green certification for current or future data centers. 29 percent of organizations do not measure power usage effectiveness (PUE); for environments with 500 servers or fewer, nearly half do not measure PUE. Of those that do, more precise measurement methods are being employed this year than last. The average global, self-reported PUE from the survey was between 1.8 and 1.89. Nine percent of the respondents reported a PUE of 2.5 or greater, while 10 percent claimed a PUE of 1.39 or less. Precision cooling strategies are improving, but there remains a long way to go. Almost one-third of respondents monitor temperatures at the room level, while only 16 percent check them at the most relevant location: the server inlet. Only one-third of respondents reported that their firms have adopted tools to identify underutilized servers and devices.

- A survey of 279 non-IT federal executives by MeriTalk, an online community and resource for government IT, finds more than half of the respondents said their top priorities include streamlining business processes. Nearly 40 percent of the executives cited cutting waste as their most important mission, and 32 percent said increasing accountability placed first on their to-do list. Moreover, less than half of the executives think of IT as an opportunity rather than a cost, while 56 percent stated IT helps support their daily operations. Even worse, less than 25 percent of the executives feel IT lends them a hand in providing analytics to support business decisions, saving money and increasing efficiency, or improving constituent processes or services. On the other hand, 95 percent of federal executives agree their agency could see substantial savings with IT modernization.

- IQNavigator, a contingent workforce software and managed service provider, released its second quarter 2012 temporary worker rate change index for the U.S. Overall, the national rate trend for 2012 has been slowly rising and now sits five percentage points above the January 2008 baseline. However, the detailed breakdown shows no growth in the professional-management job sector but movement from negative to 1.2 percent positive in the technical-IT sector. Since the rate of increase over the past six months remains below the inflation rate over the same period, the company feels it is unclear whether the trend implies upward pressure on labor rates. The firm also points out that U.S. Bureau of Labor Statistics (BLS) data underscore the importance of temporary labor, as new hires are increasingly being made through temporary employment agencies. In fact, although temporary agency employees constitute less than two percent of the total U.S. nonfarm labor force, 15 percent of all new jobs created in the U.S. in 2012 have been through temp agency placements.
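PUE, cited throughout the Uptime findings above, is conventionally defined as total facility power divided by the power delivered to IT equipment (the definition popularized by The Green Grid), so a PUE of 1.0 would mean zero overhead. The minimal Python sketch below uses hypothetical load figures, not survey data, and the function names are illustrative only. It computes the ratio and shows what fraction of facility power goes to overhead such as cooling and power distribution at the PUE values the survey reports.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment load must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility load cannot be less than the IT load")
    return total_facility_kw / it_equipment_kw


def overhead_share(pue_value: float) -> float:
    """Fraction of facility power consumed by non-IT overhead (cooling, power losses, lighting)."""
    return 1.0 - 1.0 / pue_value


# Hypothetical facility: 1,850 kW total draw, 1,000 kW reaching the servers.
example = pue(1850.0, 1000.0)
print(f"example PUE: {example:.2f}")

# PUE values mentioned in the survey results above.
for value in (1.39, 1.85, 2.5):
    print(f"PUE {value}: {overhead_share(value):.0%} of facility power is overhead")
```

At a PUE of 2.5, for example, 60 percent of the power the facility draws never reaches a server, which is why measuring PUE at all is a prerequisite for the cost savings discussed below.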

RFG POV: Company executives may voice their support for sustainability, but most have not established financial incentives designed to drive the transformation of their data centers into best-of-breed "green IT" shops. Executives still fail to recognize that being green is not just good for the environment; it also mobilizes the company to optimize resources and pursue best practices. Businesses continue to waste up to 40 percent of their IT budgets because they fail to connect the dots. Furthermore, the MeriTalk federal study reveals how far the U.S. federal government lags behind the private sector. While businesses are utilizing IT as a differentiator to attain their goals, drive revenues and cut costs, the government perceives IT only as a cost center. Federal executives should modify their business processes, align and link their development projects to their operations, and fund their operations holistically. This will eliminate sub-optimization and propel the transformation of U.S. government IT more rapidly. With the global and U.S. economies remaining weak over the mid- to long-term, the use of a contingent workforce will expand. Enterprises do not like to make long-term investments in personnel when the business and regulatory climate is not friendly to growth. Hence, the contingent workforce, domestic or overseas, will pick up the slack. IT executives should utilize a balanced approach with a broad range of workforce strategies to achieve agility and flexibility while ensuring business continuity, corporate knowledge, and management and technical control are properly addressed.

Gray Clouds on the Horizon

Lead Analyst: Cal Braunstein

According to two recent studies global IT spending is slowing while cloud adoption (excluding service providers) is occurring at a slower rate than projected. Elsewhere, according to a report released by outplacement firm Challenger, Gray & Christmas, layoffs in the technology sector for the first half of 2012 are at the highest levels seen in three years. Lastly, an Oracle Corp. big data survey finds companies are collecting more data than ever before but may be losing on average 14 percent of incremental revenue per year by not fully leveraging the information.

Focal Points:

- According to a new Gartner Inc. report, global IT spending is projected to grow 3.0 percent in 2012, down from 7.9 percent growth in 2011. The brightest spot in the analysis was that the telecom equipment category will grow by 10.8 percent, although that is down from 17.5 percent in the previous year. All the other categories – computer hardware, enterprise software, IT services, and telecom services – are growing slowly, between 1.4 percent (telecom services) and 4.3 percent (enterprise software). The slowdown is attributed to global economic stresses: the eurozone crisis, a weaker U.S. recovery, a slowdown in China, etc. For 2013 Gartner is projecting higher spending on hardware and software in the data center and on the desktop, better growth in telecom hardware (but down from 2012), and slightly higher spending on telecom services. In support of these projections, the latest Challenger, Gray report shows that during the first half of the year, 51,529 planned job cuts were announced across the tech sector. This represents a 260 percent increase over the 14,308 layoffs planned during the first half of 2011. Job cuts are so steep this year that the figure is 39 percent higher than all the job cuts recorded in the tech sector last year. Three tech companies are responsible for most of the job losses: Hewlett-Packard Co. (HP) announced it was slicing headcount by 30,000, and Nokia Corp. and Sony Corp. are each reducing staffing by 10,000. While the outplacement firm expects more cuts to be made over the next six months, it does see bright spots in some business sectors.

- According to Uptime Institute's recently released 2012 Data Center Industry Survey, cloud deployments have increased significantly worldwide over the past year. 25 percent of this year's respondents said they were adopting public clouds, while another 30 percent said they were considering it. Additionally, 49 percent were moving to private clouds, while another 37 percent were considering it. In 2011 only 16 percent of respondents stated they had deployed public clouds, whereas 35 percent claimed they had deployed private clouds. 32 percent of large organizations use the public cloud, whereas 19 percent of small organizations and 10 percent of "traditional enterprises" employ public clouds. When it comes to private clouds, 65 percent of large organizations claimed to have deployed one, but only 39 percent of small and mid-sized organizations were doing so. Public cloud adoption rates are 52 percent in Asia, 28 percent in Europe, and 22 percent in North America. Private cloud adoption rates are 42 percent in Asia, 52 percent in Europe, and 50 percent in the U.S. Cost savings and scalability were the top two reasons given for moving to the cloud (27 and 23 percent, respectively), while security was the major inhibitor to adopting cloud computing (64 percent), followed distantly by compliance and regulatory issues (27 percent).

- Oracle announced the results of its big data study, in which 333 C-level executives from U.S. and Canadian enterprises were surveyed. The study examined the pain points companies face in managing the deluge of data they must deal with, and how well they are using that information to drive profit and growth. 94 percent of respondents reported data growth, with the biggest growth in customer information (48 percent), operations (34 percent), and sales and marketing (33 percent). 29 percent of executives gave their organization a "D" or "F" in preparedness to manage the data influx, while 93 percent of respondents believe their organization is losing revenue opportunities. For companies with revenues in excess of $1 billion, the projected loss from not fully leveraging the information is approximately 13 percent of annual revenue. Most respondents are frustrated with their organizations' data gathering and distribution systems, and almost all are looking to invest in improving information optimization. The communications industry is the most satisfied with its ability to deal with data – 20 percent gave their firms an "A." Executives in the public sector, healthcare and utilities industries stated they were the least prepared to handle the data volumes and velocities: 41 percent of public sector executives, 40 percent of healthcare executives, and 39 percent of utilities executives rated themselves with either a "D" or "F" in preparedness.
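The Challenger, Gray comparison in the first bullet can be checked with the standard percentage-change formula, (new - old) / old × 100. The short Python sketch below uses only the two half-year figures quoted above. The function name `percent_change` is illustrative, not taken from the report.

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100.0


first_half_2011 = 14_308  # planned tech job cuts, H1 2011
first_half_2012 = 51_529  # planned tech job cuts, H1 2012

change = percent_change(first_half_2011, first_half_2012)
print(f"H1 2012 vs. H1 2011: {change:+.0f}%")  # prints "H1 2012 vs. H1 2011: +260%"
```

The result, about 260 percent, matches the report. Note that a 260 percent increase means the 2012 figure is roughly 3.6 times the 2011 figure, not 2.6 times.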

RFG POV: The global economy appears to be weak, with parts of Europe in or close to recession, Asia slowing rapidly, and the U.S. in weak positive territory. Economists see more storm clouds on the horizon, and few see things improving in 2012. This will trickle down to IT budgets, with many companies requesting deferrals of capital spending and/or headcount growth. IT executives need to continue their push to slash operational expenditures through better resource optimization and improved best practices. RFG still finds that most IT executives pursue practices that are no longer valid, which results in up to 40 percent of operational expenditures being wasted. Cloud computing can assist enterprises in their quest to reduce costs, but there are tradeoffs, and they need to be understood before leaping into a cloud environment. Most corporate data is no longer an island and needs to be integrated with applications and systems that already exist. Thus, before moving to an off-premise cloud environment, IT executives should ensure that the cloud environment and the data are well integrated into existing systems and that the risk exposure is acceptable. There is no doubt that big data is coming, and the volumes and velocity of change will only get worse as time marches on. The systems required to handle the increased influx of data may not look like those that exist in the data center today. It is conceivable that big data and its incorporation into day-to-day operations could require an entirely new data center architecture. Business and IT executives should strategize on how to deliver on their goals and vision, and find a way to work together to transform their shops to address the new ways of conducting business and processing data while staying within budgetary constraints.

CEO Surveys

Lead Analyst: Cal Braunstein

IBM Corp. and PricewaterhouseCoopers, LLC released results from their global CEO surveys. For the first time the IBM study identified technology as the most important external force impacting the business. Both studies recognized the need to employ finer customer segmentation and use IT to change business processes to take advantage of new opportunities.

Focal Points:

- IBM released results of its fifth biennial CEO survey, in which the company interviewed more than 1,700 CEOs, with more than six years of tenure, in 64 countries. One of the top findings was that technology is now driving more organizational change than any other force, including the economy. In this regard, outperformers embrace openness, excel in executing tough changes, differentiate themselves through better data access and insights that are put into action, and are more likely to partner for innovation and to drive revenues from new sources. The top three sources of sustained economic value are human capital, customer relationships and products/services innovation. Internal and external collaboration are being used as tools for creating organizational change. The second finding was that CEOs create more economic value by engaging customers as individuals. These companies are investing in getting customers to share their insights into what they value individually, and when and how they want to interact. Then they develop and execute plans to interact using social media as well as traditional face-to-face engagements. Outperformers in this category strongly differentiate themselves through better data access, insight, and translation into actions. While big data is playing a role in this shift, other factors include the old-fashioned practice of listening to and capturing what employees see and hear, and then being where the customer expects the company to be.

- The third major CEO survey finding was that CEOs create more economic value by pursuing more disruptive innovation with partners and collaborating to drive new revenue sources. In some cases they create new industries, while in others they merely move into existing ones. Two of the keys to meeting the partnership challenge more effectively are making the partnerships personal and breaking traditional collaboration boundaries. In effect, these companies are themselves becoming disruptors. While CEOs have shifted to address the transformational needs of the organization, CFOs are still concerned with controlling cost and improving efficiency. In a separate retail study, IBM found that most customers were willing to share information if they perceived a benefit in doing so. The greatest reluctance to share data was in the categories of financial and medical data.

- The PwC study of more than 160 CEOs in the U.S. identified customer demand as the primary driver of corporate strategy in 2012. While the executives are slightly less optimistic than last year, 40 percent expect their own companies to grow, and approximately the same number expect to complete a cross-border merger or acquisition this year. To deliver on their strategies, CEOs are reconfiguring operations in local markets, nurturing talent and addressing potential talent shortages, and encouraging the free flow of ideas and innovations. Two significant findings in the survey were a major jump in concern about competitive threats (73 percent of the CEOs are worried) and a considerable decline in anxiety about risk exposure (a 20-point drop to 19 percent). PwC notes that, with more growth opportunities arising in distant lands, business leaders are acknowledging that an overly conservative attitude will put their companies at a competitive disadvantage. PwC also determined that the top CEO priority overseas is growing the customer base, followed by access to the local talent base. Lastly, the PwC study confirmed one of the IBM findings: most CEOs were planning to enter into new strategic alliances or joint ventures this year.

RFG POV: CEOs are embracing the belief that true insights into customer wants and needs will generate additional revenues and loyalty, and that customer needs may be better satisfied through acquisitions and partnerships than through organic investment. CEOs are also recognizing that technology must play a leading role in business analytics (including big data), collaboration and social media, and business process management. All this is driving cultural and organizational change. However, one of the perennial inhibitors to change is captured by the maxim "culture eats process for lunch every day." Thus, business and IT executives must excel in executing tough cultural and process changes if they expect to convert their goals and strategies into reality. Given that an Economist Intelligence Unit study found that 60 percent of executives believe their main vertical markets will be barely recognizable by 2020, it is imperative that executives overcome their risk aversion and exposure concerns. Moreover, they must step up to the challenge and provide the strong leadership required to transform the organization to meet the demands of tomorrow.