Dell: The Privatization Advantage

Lead Analyst: Cal Braunstein

RFG Perspective: The privatization of Dell Inc. closes a number of chapters for the company and puts it more firmly on a different course. The Dell of yesterday was primarily a consumer company with a commercial business, both run on a transactional model. The new Dell is planned to be a commercial-oriented company with an interest in the consumer space. The commercial side of Dell will attempt to be relationship driven, while the consumer side will retain its transactional model. The company has solid products, channels, market share, and financials that can carry it through the transition. However, it will take years before the new model is firmly in place and adopted by its employees and channels, and competitors will not be sitting idly by. IT executives should expect Dell to pull through and therefore should take advantage of the Dell business model and transitional opportunities as they arise.

Shareholders of IT giant Dell approved a $24.9bn privatization takeover bid from company founder and CEO Michael Dell and Silver Lake Partners, backed by bank financing and a loan from Microsoft Corp. It was a hard-fought battle with many twists and turns, but the ownership uncertainty is now resolved. The open question is whether it was worth it. Will the company and Michael Dell be able to change the vendor's business model and succeed in the niche that he has carved out?

Dell's New Vision

After the buyout, Michael Dell spoke to analysts about his five-point plan for the new Dell:

- Extend Dell's presence in the enterprise sector through investments in research and development as well as acquisitions. Dell's enterprise solutions unit is already a $25 billion business, and it grew nine percent last quarter – at a time when competitors struggled. According to the CEO, Dell is number one in servers in the Americas and AP, ships more terabytes of storage than any competitor, and has completed 1,300 mainframe migrations to Dell servers. (Worldwide, IDC says Hewlett-Packard Co. (HP) is still in first place for server shipments, by a hair.)

- Expand sales coverage and push more solutions through the Partner Direct channel. Dell has more than 133,000 channel partners globally, with about 4,000 certified as Preferred or Premier. Partners drive a major share of Dell's business.

- Target emerging markets. While Dell does not break out revenue numbers by geography, APJ and BRIC (Brazil, Russia, India and China) saw minor year-over-year gains last quarter, although China was flat and sales in Russia dropped 33 percent.

- Invest in the PC market as well as in tablets and virtual computing. The company will not manufacture phones but will sell mobile solutions in other mobility areas. Interestingly, he said that when it comes to end user computing, Dell is now more a commercial seller than a consumer one. This is a big shift from the old Dell and puts it in the same camp as HP. The company appears to be structuring a full-service model for commercial enterprises.

- "Accelerate an enhanced customer experience." Michael Dell states that Dell will serve its customers with a single-minded purpose and drive innovations that help them be more productive, grow, and achieve their goals.

Strengths, Weaknesses, Challenges and Competition

With the uncertainty over, Dell can now fully focus on executing plans that were in place prior to the initial stalled buyout attempt. Financially, Dell has sufficient funds to address its business needs and operates with strong positive cash flow. Brian Gladden, Dell's CFO, said Dell generated $22 billion in cash flow over the past five years and conceded that the new Dell's debt load would be under $20 billion. This should give the company plenty of room to maneuver.

In the last five quarters Dell has spent $5 billion on acquisitions, and since 2007, when Michael Dell returned as CEO, it has paid more than $13.7 billion for acquisitions. Gladden said Dell will aim to reduce its debt, invest in enhanced and innovative product and services development, and buy other companies. However, the acquisitions will be of a "more complementary" type rather than the expensive, big-bang deals Dell has done in the past.

The challenge for Dell financially will be to grow the enterprise segments faster than the end user computing markets collapse. As the chart below shows, enterprise offerings currently account for less than 40 percent of revenues, and while they are growing nicely, end user computing revenue is shrinking faster in dollar terms.

Source: Dell's 2Q FY14 Performance Review

Dell also has a strong set of enterprise products and services. The server business does well, and the company has positioned itself strongly in the hyperscale data center space, where it holds a dominant share of custom server sales. Unfortunately, margins in that space are not as robust as in other parts of the server market. Moreover, the custom server market serves the needs of cloud service providers, and Dell will have to contend with "white box" providers, lower prices and shrinking margins going forward. Networking is doing well too, but storage remains a soft spot. After dropping out as an EMC Corp. channel partner and betting on its own acquired storage companies, Dell lost ground and still struggles to gain the needed momentum in the non-DAS space. The mid-range EqualLogic and higher-end Compellent solutions, while good, face stiff competition and need to up their game if Dell is to become a full-service provider.

Software is growing, but the base is too small at the moment. Nonetheless, this will prove to be an important sector for Dell going forward. With major acquisitions (such as Boomi, KACE, Quest Software and SonicWALL) and the leadership of John Swainson, who has an excellent record of growing software companies, Dell software is poised to be an integral part of the new enterprise strategy. Meanwhile, the Services Group appears to be making only modest gains, although its Infrastructure, Cloud, and Security services are resonating with customers. Overall, this needs to change if Dell is to move upstream and build relationship sales. Because the company has traditionally been transaction oriented, moving to a relationship model will be one of its major transformational initiatives. This process could easily take up to a decade before it is fully locked in and the units work well together.

Michael Dell also stated, "we stand on the cusp of the next technological revolution. The forces of big data, cloud, mobile, and security are changing the way people live, businesses operate, and the world works – just as the PC did almost 30 years ago." The new strategy addresses that shift, but the End User Computing unit still derives most of its revenues from desktops, thin clients, software and peripherals. About 40 percent comes from mobility offerings, but Dell has been losing ground there. The company will need to shore up that business to meet its growth and margin objectives.

While Dell transforms itself, its competitors will not be sitting still. HP is in the midst of its own makeover and has good products and market share, but it still suffers from morale and other problems caused by the upheavals of the last few years. IBM Corp. maintains its version of the full-service business model but will likely take on Dell in selected markets where it can still get decent margins. Cisco Systems Inc. has been taking market share from all the server vendors and will be an aggressive challenger over the next few years as well. Hitachi Data Systems (HDS), EMC, and NetApp Inc., along with a number of smaller players, will also test Dell in the non-DAS (direct-attached storage) market segments. It remains to be seen whether Dell can fend them off and grow its revenues and market share.

Summary

Michael Dell and the management team have major challenges ahead as they attempt to change the business model, re-orient people's mindsets, develop innovative, efficient and affordable solutions, and fend off competitors while they slowly back away from the consumer market. Dell wants to be the infrastructure provider for cloud providers and enterprises of all types – "the BASF inside" in every company. It still intends to do this by becoming the top vendor of choice for end-to-end IT solutions and services. As the company still has much work to do in creating a stronger customer relationship sales process, Dell will have to walk some fine lines while it figures out how to create the best practices for its new model. Privatization enables Dell to deal with these issues more easily without public scrutiny and sniping over margins, profits, revenues and strategies.

RFG POV: Dell will not be fading away in the foreseeable future. It may be less evident in the consumer space, but in the commercial markets privatization will allow it to push harder to remain, or become, one of the top three providers in each segment it plays in. The biggest unknown is its ability to convert to a relationship management model and provide a level of service that keeps clients wanting to spend more of their IT dollars with Dell rather than with the competition. IT executives should be confident that Dell will remain a reliable, long-term supplier of IT hardware, software and services. Therefore, where appropriate, IT executives should consider Dell for their short lists of providers of infrastructure products and services, and increasingly of software solutions for managing big data, cloud and mobility environments.

The Future of NAND Flash; the End of Hard Disk Drives?

Lead Analyst: Gary MacFadden

RFG POV: In the relatively short and fast-paced history of data storage, the buzz around NAND Flash has never been louder, the product innovation from manufacturers and solution providers never more electric. Thanks to mega-computing trends, including analytics, big data, cloud and mobile computing, along with software-defined storage and the consumerization of IT, the demand for faster, cheaper, more reliable, manageable, higher capacity and more compact Flash has never been greater. But how long will the party last?

In this modern era of computing, the art of dispensing predictions, uncovering trends and revealing futures is de rigueur. To quote that well-known trendsetter and fashionista, Cher, "In this business, it takes time to be really good – and by that time, you're obsolete." While meant for another industry, Cher's ruminations seem just as appropriate for the data storage space.

At a time when industry pundits and Flash solution insiders are predicting the end of mass data storage as we have known it for more than 50 years, namely the mechanical hard disk drive (HDD), storage futurists, engineers and computer scientists are paving the way for the next generation of storage beyond NAND Flash – even before Flash has had a chance to become a mature, trusted, reliable, highly available and ubiquitous enterprise class solution. Perhaps we should take a breath before we trumpet the end of the HDD era or proclaim NAND Flash as the data storage savior of the moment.

Short History of Flash

Flash has been commercially available since its invention and introduction by Toshiba in the late 1980s. NAND Flash is a type of non-volatile memory (NVM) known for being at least an order of magnitude faster than HDDs; because it has no moving parts, it also requires far less power. NAND Flash is found in billions of personal devices, including mobile phones, tablets, laptops, cameras and USB thumb drives, and over the last decade it has become more powerful, compact and reliable as prices have dropped, making enterprise-class Flash deployments much more attractive.

At the same time, IOPS-hungry applications such as database queries, OLTP (online transaction processing) and analytics have pushed traditional HDDs to the limits of the technology. To maintain performance, measured in IOPS or read/write speeds, enterprise IT shops have employed a number of HDD workarounds such as short stroking, thin provisioning and tiering. While HDDs can still meet the performance requirements of most enterprise-class applications, organizations pay a huge penalty in additional power consumption, data center real estate (it takes 10 or more high-performance HDDs to match the performance of the slowest enterprise-class Flash or solid-state drive (SSD)), and additional administrator, storage and associated equipment costs.

Flash is becoming pervasive throughout the compute cycle. It is now found on DIMMs (dual in-line memory modules), where it helps solve the in-memory data persistence problem and improve latency. There are Flash cache appliances that sit between the server and a traditional storage pool to speed access to data residing on HDDs, as well as server-side Flash or SSDs, and all-Flash arrays that fit into the SAN (storage area network) fabric or can even replace smaller, sub-petabyte, HDD-based SANs altogether.

There are at least three grades of Flash drives, starting with the top-performing, longest-lasting – and most expensive – SLC (single-level cell) Flash, which stores one bit per cell, followed by MLC (multi-level cell), which doubles that to two bits per cell, and TLC (triple-level cell), which stores three. As Flash manufacturers continue to push the envelope on drive capacity, the individual cells have gotten smaller; they are now below 20 nm (one nanometer is a billionth of a meter) in width, tinier than a virus particle of roughly 30-50 nm.
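The trade-off between density and endurance follows from simple arithmetic: a cell that stores n bits must reliably distinguish 2^n charge states, so every added bit narrows the margin between neighboring states. A minimal sketch, assuming an idealized cell whose usable voltage window is normalized to 1.0 and split evenly (a hypothetical simplification, not a vendor specification):

```python
# Bits stored per cell for the three Flash grades.
GRADES = {"SLC": 1, "MLC": 2, "TLC": 3}


def charge_levels(bits_per_cell: int) -> int:
    """Distinct charge states a cell must hold to store this many bits."""
    return 2 ** bits_per_cell


def level_spacing(bits_per_cell: int, window: float = 1.0) -> float:
    """Gap between adjacent states when a normalized (hypothetical) voltage
    window is split evenly: N states leave N - 1 gaps."""
    return window / (charge_levels(bits_per_cell) - 1)


for name, bits in GRADES.items():
    # Prints: SLC 2 states / 1.000, MLC 4 states / 0.333, TLC 8 states / 0.143
    print(f"{name}: {charge_levels(bits)} states, spacing {level_spacing(bits):.3f}")
```

Going from SLC to TLC triples the data per cell but shrinks the idealized gap between states from the full window to about a seventh of it, which is one reason denser grades program more slowly and tolerate less wear.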

Each cell can withstand only a finite number of writes and erasures before its performance starts to degrade; drive endurance is commonly rated in TBW (total bytes written). These program/erase, or P/E, cycles cause SSDs and other Flash devices to wear out because the thin oxide layer that insulates each cell's stored charge degrades with every cycle. However, Flash management software that uses striping across drives, garbage collection and wear leveling to distribute writes evenly across the drive increases longevity.
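Wear leveling is the easiest of these techniques to picture in code. The toy allocator below is a minimal sketch of dynamic wear leveling only (no garbage collection, striping or static wear leveling), assuming a drive modeled as a handful of whole erase blocks; the class and method names are hypothetical and do not correspond to any vendor's firmware:

```python
import heapq


class WearLevelingAllocator:
    """Toy dynamic wear leveling: each write goes to the free physical block
    with the fewest program/erase (P/E) cycles so far (hypothetical model)."""

    def __init__(self, num_blocks: int) -> None:
        self.erase_counts = [0] * num_blocks
        # Min-heap of (erase_count, block_id) for erased blocks holding no live data.
        self.free = [(0, block) for block in range(num_blocks)]
        heapq.heapify(self.free)
        self.mapping = {}  # logical block number -> physical block number

    def write(self, logical_block: int) -> int:
        """Write (or rewrite) a logical block and return the physical block used."""
        if not self.free:
            raise RuntimeError("no free blocks available")
        # Pick the least-worn erased block for the new data.
        _, target = heapq.heappop(self.free)
        stale = self.mapping.get(logical_block)
        if stale is not None:
            # Flash cannot overwrite in place: the old copy's block is erased
            # (costing one P/E cycle) and returned to the free pool.
            self.erase_counts[stale] += 1
            heapq.heappush(self.free, (self.erase_counts[stale], stale))
        self.mapping[logical_block] = target
        return target


# One "hot" logical block rewritten 1,000 times on an 8-block toy drive.
ssd = WearLevelingAllocator(num_blocks=8)
for _ in range(1000):
    ssd.write(0)
print(ssd.erase_counts)
```

Even though a single logical block is rewritten 1,000 times, the 999 resulting erases end up spread across all eight physical blocks, within one cycle of each other; a fixed logical-to-physical mapping would instead concentrate them on one block. Real controllers work at page granularity and also relocate cold data, which this sketch omits.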

Honey, I Shrunk the Flash!

As cells shrink below 20 nm, more bit errors occur. New 3D architectures announced and discussed by a number of vendors at the Flash Memory Summit (FMS) hold the promise of replacing the traditional NAND Flash floating gate architecture. Samsung, for instance, announced the availability of its 3D V-NAND, which uses Charge Trap Flash (CTF) technology in place of the traditional floating gate to help prevent interference between neighboring cells and to improve performance, capacity and longevity.

Samsung claims the V-NAND offers an "increase of a minimum of 2X to a maximum 10X higher reliability, but also twice the write performance over conventional 10nm-class floating gate NAND flash memory." If 3D Flash proves successful, cells could potentially shrink to sub-2 nm dimensions, roughly the width of a DNA double helix.

Enterprise Flash Futures and Beyond

Flash appears headed for use in every part of the server and storage fabric, from DIMM to server cache and storage cache and as a replacement for HDD across the board – perhaps even as an alternative to tape backup. The advantages of Flash are many, including higher performance, smaller data center footprint and reduced power, admin and storage management software costs.

As Flash prices continue to drop, concomitant with capacity increases, reliability improvements and drive longevity – which today already exceeds the longevity of mechanical HDDs for the vast majority of applications – the argument for Flash, or tiers of Flash (SLC, MLC, TLC), replacing HDD is compelling. The big question for NAND Flash is not when all Tier 1 apps will be running on Flash at the server and storage layers, but rather when Tier 2 and even archived data will be stored on all-Flash solutions.

Much of the answer resides in the growing demands for speed and data accessibility as business use cases evolve to take advantage of higher compute performance capabilities. The old adage that 90%-plus of data that is more than two weeks old rarely, if ever, gets accessed no longer applies. In the healthcare ecosystem, for example, longitudinal or historical electronic patient records now go back decades, and pharmaceutical companies are required to keep clinical trial data for 50 years or more.

Pharmacological data scientists, clinical informatics specialists, hospital administrators, health insurance actuaries and a growing number of physicians regularly plumb the depths of healthcare-related Big Data that is both newly created and perhaps 30 years or more in the making. Other industries, including banking, energy, government, legal, manufacturing, retail and telecom are all deriving value from historical data mixed with other data sources, including real-time streaming data and sentiment data.

Not all data may be useful or meaningful, but that hasn't stopped business users from including all potentially valuable data in their searches and queries. More data is apparently better, and faster is almost always preferred, especially for analytics, database and OLTP applications. With Flash, backup windows shrink, and recovery and other batch jobs often run much faster.

What Replaces DRAM and Flash?

Meanwhile, engineers and scientists are working hard on replacements for DRAM (dynamic random-access memory) and Flash, introducing MRAM (magnetoresistive), PRAM (phase-change), SRAM (static) and RRAM – among others – to the compute lexicon. RRAM or ReRAM (resistive random-access memory) could replace DRAM and Flash, which both use electrical charges to store data. RRAM uses "resistance" to store each bit of information. According to wiseGEEK, "The resistance is changed using voltage and, also being a non-volatile memory type, the data remain intact even when no energy is being applied. Each component involved in switching is located in between two electrodes and the features of the memory chip are sub-microscopic. Very small increments of power are needed to store data on RRAM."

According to Wikipedia, RRAM or ReRAM "has the potential to become the front runner among other non-volatile memories. Compared to PRAM, ReRAM operates at a faster timescale (switching time can be less than 10 ns), while compared to MRAM, it has a simpler, smaller cell structure (less than 8F² MIM stack). There is a type of vertical 1D1R (one diode, one resistive switching device) integration used for crossbar memory structure to reduce the unit cell size to 4F² (F is the feature dimension). Compared to flash memory and racetrack memory, a lower voltage is sufficient and hence it can be used in low power applications."
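The F² notation in that quote is a normalized footprint: a cell's area expressed as a multiple of the squared feature size F. A quick sketch of what moving from an 8F² cell to a 4F² crossbar cell means for idealized density, assuming a hypothetical F of 20 nm chosen only for illustration and ignoring peripheral circuitry and array overhead:

```python
def cells_per_mm2(feature_nm: float, area_factor: float) -> float:
    """Idealized cells per square millimetre for a cell of area
    area_factor * F**2 (no peripheral circuitry or array overhead)."""
    cell_area_nm2 = area_factor * feature_nm ** 2
    nm2_per_mm2 = 1e12  # 1 mm = 1e6 nm, so 1 mm^2 = 1e12 nm^2
    return nm2_per_mm2 / cell_area_nm2


F = 20.0  # hypothetical feature size in nm, for illustration only
for label, factor in [("8F^2 cell", 8), ("4F^2 crossbar cell (1D1R)", 4)]:
    print(f"{label}: {cells_per_mm2(F, factor):.3g} cells per mm^2")
```

Whatever F turns out to be, halving the cell footprint doubles the idealized number of cells per unit of die area; that ratio, not the absolute figures, is the point of the comparison.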

Then there's atomic storage, a nanotechnology that IBM scientists and others are reportedly working on today. The goal is to determine whether a bit of data can be stored on a single atom. To put that in perspective, a single grain of sand contains billions of billions of atoms. IBM is also working on racetrack memory, a type of non-volatile memory that holds the promise of storing 100 times the capacity of current SSDs.

Flash Lives Everywhere! … for Now

Just as paper and computer tape drives remain relevant and useful, HDD will remain in favor for certain applications, such as sequential processing workloads or when massive, multi-petabyte data capacity is required. And lest we forget, HDD manufacturers continue to improve the speed, density and cost equation for mechanical drives. Also, 90% of data storage manufactured today is still HDD, so it will take a while for Flash to outsell HDD, and for Flash management software to reach the level of sophistication found in traditional storage management solutions.

That said, there are Flash proponents who can't wait for the changeover to happen and don't want or need Flash to reach parity with HDD on features and functionality. One of the most talked-about keynote presentations at last August's Flash Memory Summit (FMS) was given by Facebook's Jason Taylor, Ph.D., Director of Infrastructure and Capacity Engineering and Analysis. Facebook's and Dr. Taylor's point of view is: "We need WORM or Cold Flash. Make the worst Flash possible – just make it dense and cheap, long writes, low endurance and lower IOPS per TB are all ok."

Other presenters, including Violin Memory CEO Don Basile and Pure Storage CEO Scott Dietzen, made relatively bold predictions about when Flash would take over the compute world. Basile showed a 2020 Predictions slide in his deck that stated: "All active data will be in memory." Basile anticipates that "everything" (all data) will be in memory within 7 years (except for archive data on disk). Meanwhile, Dietzen is an articulate advocate for all-Flash storage solutions because "hybrids (arrays with Flash and HDD) don't disrupt performance. They run at about half the speed of all-Flash arrays on I/O-bound workloads." Dietzen also suggests that, with compression and data deduplication, Flash has reached, or even bettered, cost parity with spinning disk.

Bottom Line

NAND Flash has definitively demonstrated its value for mainstream enterprise performance-centric application workloads. Whether, when and how Flash replaces HDD as the dominant medium in the data storage stack remains to be seen. Perhaps some new technology will leapfrog over Flash and signal its demise before it has time to really mature.

For now, HDD is not going anywhere, as it represents over $30 billion of new sales in the $50-billion-plus total storage market – not to mention the enormous investment that enterprises have in spinning storage media that will not be replaced overnight. But Flash is gaining, and users and their IOPS-intensive apps want faster, cheaper, more scalable and manageable alternatives to HDD.

RFG POV: At least for the next five to seven years, Flash and its adherents can celebrate the many benefits of Flash over HDD. IT executives should recognize that for the vast majority of performance-centric workloads, Flash is much faster, lasts longer and costs less than traditional spinning disk storage. And Flash vendors already have their sights set on Tier 2 apps, such as email, and Tier 3 archival applications. Fast, reliable and inexpensive is tough to beat, and for now Flash is the future.

Focusing the Health Care Lens

Lead Analyst: Maria DeGiglio

RFG Perspective: Myriad components, participants, issues, and challenges define health care in the United States today. To this end, we have identified five main components of health care: participants, regulation, cost, access to/provisioning of care, and technology – all of which intersect at many points. Health care executives – whether payers, providers, regulators, or vendors – must understand these interrelationships, and how they continue to evolve, so that they can proactively address them in their respective organizations and remain competitive.

This post discusses some key interrelationships among the aforementioned components and teases out some of the complexities inherent in the dependencies and co-dependencies in the health care system and their effect on health care organizations.

The Three-Legged Stool:

One way to examine the health care system in the United States is through the interrelationship and interdependence among access to care, quality of care, and cost of care. If any one of access, quality, or cost is removed, the relationship (i.e., the stool) collapses. Let's examine each component.

Access to health care comprises several factors, including the ability to pay (e.g., through insurance and/or out of pocket); a health care facility that meets the patient's health care needs; transportation to and from that facility; and the ability to fill whatever post-discharge orders are issued (e.g., rehabilitation, pharmacy, etc.).

Quality of care includes, but is not limited to, a health care facility or physician's office that employs medical staff with the skills to diagnose and treat the patient's specific condition effectively and satisfactorily. This means treating the patient without error, without causing harm, and without requiring repeated visits because the clinical staff are unable to diagnose and treat the condition correctly.

Lastly, cost of care comprises multiple sources including:

• Payment (possibly from several sources) for services rendered

• Insurance assignment (what the clinical entity agrees to accept from an insurance company whose insurance it accepts)

• Government reimbursement

• Tax write-offs

• The costs incurred by the clinical entity that delivers health care.

As previously mentioned, in this model, if one component or "leg" is removed the "stool" collapses.

If a patient has access to care and the means to pay, but the quality of care is sub-standard or even harmful resulting in further suffering or even death, the health care system has failed the patient.

If the patient has access to quality care but is unable to afford it, either because he/she lacks insurance or cannot pay out-of-pocket costs, then the system has once again failed the patient. It is important to note that under the federal Emergency Medical Treatment and Active Labor Act (EMTALA), an Emergency Department (ED) must evaluate a patient and, if emergent treatment is required, stabilize the patient. However, the patient will then receive a bill for the full fees for service – not the discounted rates health care providers negotiate with insurance companies.

In the third scenario, if the patient lacks access to care because of distance, disability, or other transportation issues (this excludes ambulance), the system has again failed the patient because he/she cannot get to a place where he/she can get the necessary care (e.g., daily physical therapy, etc.).

This example of the interrelationship among access, quality, and cost underscores the fragile ecology of the health care system in the United States today and is a call to action to payers, providers, and regulators to provide oversight and governance as well as transparency. Health care vendors affect, and are affected by, the interrelationship among access, quality, and cost. Prohibitive costs for payers and providers depress sales of vendor products and services or force vendors to dilute their offerings. Health care vendors can positively affect the quality of care by offering products that enable provider organizations to proactively oversee, troubleshoot, and remediate quality issues. They can also affect cost by providing products and services that are compliant today and will remain compliant as policies change, which yields both hard and soft dollar savings for providers.

Managing the Information, Not the Cost:

An example of what can sometimes be a paradoxical health care system interrelationship is that between the process of providing care and the actual efficient provisioning of quality care.

While most health care providers are complying with the federal mandate to adopt electronic medical records by 2014, many still struggle with manual processes, information silos, and issues of interconnectivity among disparate providers and payers. There is also the paradox that hospitals have been steadily closing their doors for more than 25 years while Emergency Departments (EDs) continue to be crammed full of patients who must sometimes wait inordinate amounts of time to be triaged, treated, and admitted or discharged.

One barrier to prompt triage and treatment in an emergency department is process inefficiency (or a lack of qualified medical personnel). Take the example of a dying patient struggling to produce proof of insurance for the emergency department registrar – the gatekeeper to diagnosis and treatment – before collapsing dead on the hospital floor.

But the process goes beyond just proof of insurance and performing the intake. It extends to the ability to:

• Access existing electronic medical history

• Triage the patient, order labs, imaging, and/or other tests

• Compile results

• Make a correct diagnosis

• Correctly treat the patient

• Comply with federal/state regulations.

A breakdown in any of these steps in the process can negatively affect the health and well-being of the patient – and the reputation of the hospital.

Some providers have taken a hard look at their systems, streamlined and automated them, and created more efficient workflow processes. These providers have been effective both in delivering prompt care and in reducing costs and patient grievances. One health care executive says he advises his staff to manage the information rather than the money, because the longer it takes to register a patient, triage that patient, refer him/her to a program, get him/her into the correct program, ensure the patient remains until treated, bill the correct payer, and get paid, the more money is lost.

The provisioning of satisfactory health care is related to both provider and payer processes and workflows. By removing inefficiency and waste, streamlining and standardizing processes, and automating workflows, health care provider executives will likely provide patients with better access to quality medical care at reduced cost for their organizations.

The Letter of the Law:

Another tenuous interrelationship is among the law (specifically the Health Insurance Portability and Accountability Act of 1996 (HIPAA)) and its enforcement, technology (i.e., the treatment of electronic medical records), and how provider organizations safeguard protected health information (PHI) – or don't. Two incidents that made national news are discussed in the New York Times article by Milt Freudenheim and Robert Pear (2006) entitled "Health Hazard: Computers Spilling Your History." The two incidents:

(1) Former President Bill Clinton, who was admitted to New York-Presbyterian Hospital for heart surgery. (Hackers, including hospital staff, tried to access President Clinton's electronic medical records and his patient care plan.)

(2) Nixzmary Brown, the seven-year-old who was beaten to death by her stepfather. (According to the Times, the New York City public hospital system reported that "dozens" of employees at one of its Brooklyn medical centers had illegally accessed Nixzmary's electronic medical records.)

These two incidents, and there have certainly been many more, illustrate the tenuous interrelationship among a law that was passed, in part, to protect private health information, the abuses that have been perpetrated, and the responsibility of health care organizations to protect their patients' right to privacy and confidentiality.

Progress has been and is being made with:

• More stringent self-policing and punitive measures

• Use of more sophisticated applications to track staff member log-ons and to permit only staff who have direct contact with a patient to see that patient's electronic medical records

• Hiring, or promoting from within, IT compliance officers who understand the business, the law, and technology, to ensure that patient information is handled in a compliant manner within health care facilities' walls and that outside breaches are prevented.
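The log-on tracking and care-relationship safeguard described in the list above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a HIPAA compliance mechanism: the class, the patient and staff IDs, and the simple care-team model are all hypothetical, and production EHR systems typically also need emergency override procedures, role hierarchies and tamper-resistant audit storage:

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class RecordAccessPolicy:
    """Hypothetical policy: staff may open a patient's record only if they are
    on that patient's care team; every attempt, allowed or not, is logged."""

    care_team: dict[str, set[str]] = field(default_factory=dict)  # patient -> staff IDs
    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def assign(self, patient_id: str, staff_id: str) -> None:
        """Record a direct care relationship between staff member and patient."""
        self.care_team.setdefault(patient_id, set()).add(staff_id)

    def can_view(self, staff_id: str, patient_id: str) -> bool:
        allowed = staff_id in self.care_team.get(patient_id, set())
        # Log every attempt with a UTC timestamp so reviewers can audit access.
        self.audit_log.append(
            (datetime.now(timezone.utc).isoformat(), staff_id, patient_id, allowed)
        )
        return allowed

    def denied_attempts(self) -> list[tuple[str, str, str, bool]]:
        """Entries a compliance officer would review first."""
        return [entry for entry in self.audit_log if not entry[3]]


policy = RecordAccessPolicy()
policy.assign("patient-001", "nurse-17")
policy.can_view("nurse-17", "patient-001")  # True: on the care team
policy.can_view("clerk-42", "patient-001")  # False: no care relationship, logged
print(policy.denied_attempts())
```

The design choice worth noting is that denied attempts are logged rather than silently dropped; the incidents described earlier involved staff browsing records they had no reason to see, and an audit trail is what lets a compliance officer detect and report that behavior.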

Compliance with privacy laws depends on being able to enforce those laws and on having processes and technology in place that detect, identify, report, and prevent abuses. Technology is far ahead of the laws and policies that govern it. Moreover, the creation of law does not always go hand-in-hand with its enforcement. Health care technology vendors must work with their provider customers to better understand their environments and to craft products that enable health care providers to safeguard PHI and remain compliant. Health care regulators must continuously address how to regulate new and emerging technologies as well as how to enforce those regulations.

Summary

The above are just three examples of the myriad interrelationships among the aforementioned health care components. It is clear that no one element stands alone and that all are interconnected, many in innumerable ways. These examples also underscore the fact that health care executives, whether payers, providers, vendors, etc., must understand these interrelationships and how they can help or harm their respective organizations, and their patients.

RFG POV: Health care executives are challenged to develop and deliver solutions even though the state of the industry is in flux and the risk of missing the mark can be high. Therefore, executives should continuously ferret out the changing requirements, understand applicability, and find ways to strengthen existing, and forge new, interrelationships and solution offerings. To minimize risks executives need to create flexible processes and agile, modular solutions that can be easily adjusted to meet the latest marketplace demands.

Decision-Making Bias – What, Me Worry?

RFG POV: The results of decision-making bias can come back to bite you. Decision-making bias exists and is challenging to eliminate! In my last post, I discussed the thesis put forward by Nobel Laureate Daniel Kahneman and co-authors Dan Lovallo and Olivier Sibony in their June 2011 Harvard Business Review (HBR) article entitled "Before You Make That Big Decision…". With the right HBR subscription you can read the original article here. Executives must find and neutralize decision-making bias.

The authors discuss the impossibility of identifying and eliminating decision-making bias in ourselves, but leave open the door to finding decision-making bias in our processes and in our organization. Even better, beyond detecting bias you may be able to compensate for it and make sounder decisions as a result. Kahneman and his McKinsey and Co. co-authors state:

We may not be able to control our own intuition, but we can apply rational thought to detect others' faulty intuition and improve their judgment.

Take a systematic approach to decision-making bias detection and correction

The authors suggest a systematic approach to detecting decision-making bias. They distill their thinking into a dozen rules to apply when making important decisions based on the recommendations of others. The authors are not alone in their thinking!

In an earlier post on critical thinking, I mentioned the Baloney Detection Kit. You can find the "Baloney Detection Kit" for grown-ups from the Richard Dawkins Foundation for Reason and Science and Skeptic Magazine editor Dr. Michael Shermer on the Brainpickings.org website, along with a great video on the subject.

Decision-making Bias Detection and Baloney Detection

How similar are Decision-Bias Detection and Baloney Detection? You can judge for yourself by looking at the following table. I’ve put each list in the order in which it was originally presented and made no attempt to cross-reference the entries. Yet it is easy to see the common threads of skepticism and inquiry. It is all about asking good questions and anticipating familiar patterns of biased thought. Of course, basing the analysis on good-quality data is critical!

Decision-Bias Detection and Baloney Detection Side by Side

| Decision-Bias Detection | Baloney Detection |
|---|---|
| Is there any reason to suspect errors driven by your team's self-interest? | How reliable is the source of the claim? |
| Have the people making the decision fallen in love with it? | Does the source of the claim make similar claims? |
| Were there any dissenting opinions on the team? | Have the claims been verified by someone else (other than the claimant)? |
| Could the diagnosis of the situation be overly influenced by salient analogies? | Does this claim fit with the way the world works? |
| Have credible alternatives been considered? | Has anyone tried to disprove the claim? |
| If you had to make this decision again in a year, what information would you want, and can you get more of it now? | Where does the preponderance of the evidence point? |
| Do you know where the numbers came from? | Is the claimant playing by the rules of science? |
| Can you see the "Halo" effect? (The story seems simpler and more emotional than it really is.) | Is the claimant providing positive evidence? |
| Are the people making the recommendation overly attached to past decisions? | Does the new theory account for as many phenomena as the old theory? |
| Is the base case overly optimistic? | Are personal beliefs driving the claim? |
| Is the worst case bad enough? | |
| Is the recommending team overly cautious? | |

Conclusion

While I have blogged about the negative business outcomes due to poor data quality, good-quality data alone will not save you from the decision-making bias of your recommendations team. When good-quality data is misapplied or misinterpreted, absent from the decision-making process, or ignored due to personal "gut" feelings, decision-making bias is right there, ready to bite you. Stay alert, and stay skeptical!

Reprinted by permission of Stu Selip, Principal Consulting LLC

Cisco, Dell and Economic Impacts

Lead Analyst: Cal Braunstein

Cisco Systems Inc. reported respectable financial results for its fourth quarter and full fiscal year 2013 but plans layoffs nonetheless. Meanwhile, Dell Inc. announced flat second quarter fiscal 2014 results, with gains in its enterprise solutions and services that were offset by declines in end user computing revenues. In other news, the recession has ended in the EU, but U.S. and global growth remain weak.

Focal Points:

• Cisco, a bellwether for IT and the global economy overall, delivered decent fourth quarter and fiscal year 2013 financial results. For the quarter Cisco had revenues of $12.4 billion, an increase of 6.2 percent over the previous year's quarter. Net income on a GAAP basis was $2.3 billion for the quarter, an 18 percent jump year-over-year. For the full fiscal year Cisco reported revenues of $48.6 billion, up 5.5 percent from the prior year while net income for the year on a GAAP basis was $10.0 billion, a 24 percent leap from the 2012 fiscal year. The company plans on laying off 4,000 employees – or about five percent of its workforce – beginning this quarter due to economic uncertainty. According to CEO John Chambers the economy is "more mixed and unpredictable than I have ever seen it." While he sees growth in the public sector for a change, slow growth in the BRIC (Brazil, Russia, India, China) nations, EU, and U.S. are creating headwinds, he claims. On the positive front, Chambers asserts Cisco is number one in clouds and major movements in mobility and the "Internet of everything" will enable Cisco to maintain its growth momentum.

• Dell's second quarter revenues were $14.5 billion, virtually unchanged from the previous year's quarter. Net income on a GAAP basis was $433 million, a drop of 51 percent year-over-year. The Enterprise Solutions Group (ESG) achieved eight percent year-over-year growth while the Services unit grew slightly and the predominant End User Computing unit shrank by five percent. The storage component of ESG declined by 7 percent and is now only 13 percent of the group's revenues. The server, networking, and peripherals component of ESG increased its revenues by 10 percent, with servers doing well and networking up 19 percent year-over-year. Dell claims its differentiated strategy includes a superior relationship model, but that has not yet translated into meaningful services revenue growth. Dell's desktop and thin client revenues were flat year-over-year while mobility revenues slumped 10 percent and software and peripherals slid five percent in the same period. From a geographic perspective, the only newsworthy revenue gains or losses occurred in the BRIC countries. Brazil and India were up seven and six percent, respectively, while China was flat and Russian revenue collapsed by 33 percent from the previous year's quarter.

• The second quarter brought an improved outlook in Europe. Eurozone GDP rose 0.3 percent, the first positive reading for the eurozone since late 2011. Portugal surprised everyone with a 1.1 percent GDP jump, while France and Germany grew 0.5 percent and 0.7 percent, respectively. Meanwhile, the U.S. economy grew 1.7 percent in the second quarter. According to revised figures, U.S. GDP growth nearly stalled at 0.1 percent in the fourth quarter of 2012 and was 1.1 percent in the first quarter of this year (versus the earlier estimate of 1.8 percent). Additionally, venture capital has slipped 7 percent this year.

RFG POV: Cisco's financial results and actions can be viewed as a beacon of what businesses and IT executives can expect to encounter over the near term. The economic indicators are hinting at a change of leadership and potential problems ahead in some unexpected areas. The BRIC nations are running into headwinds that may not abate soon and may even get much worse, according to some economists. U.S. GDP growth is flattening in the second half of the year for the third year in a row, and the EU, while improving, has a long way to go before it becomes healthy. This does not bode well for IT budgets or hiring heading into 2014. IT executives will need to continue transforming their operations and find ways to incorporate new, disruptive technologies to cut costs and improve productivity. As the Borg of Star Trek liked to say, "resistance is futile." While transformation is a multi-year initiative, IT executives who do not move rapidly to cost-efficient "IT as a Service" environments may find they have put their organizations and themselves at risk.

System Dynamics is now for the rest of us!

by Stu Selip, Principal Consulting

That's great, but what is it? According to the System Dynamics Society:

System dynamics is a computer-aided approach to policy analysis and design. It applies to dynamic problems arising in complex social, managerial, economic, or ecological systems -- literally any dynamic systems characterized by interdependence, mutual interaction, information feedback, and circular causality.

So, System Dynamics (SD) uses computers to simulate dynamic systems that are familiar to us in the realms of business and technology. OK, that sounds great, but why am I writing about SD? A presentation by J. Chris White, president of ViaSim Solutions (ViaSim), and his ViaSim colleague Robert Sholtes at last Friday's InfoGov Community call piqued my interest, and I think SD will pique your interest too.
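To make the definition concrete, here is a tiny stock-and-flow model in Python, advanced with fixed Euler time steps (a common default integration method in SD tools). The model is a hypothetical illustration, not taken from ViaSim or pmBLOX: a backlog of tasks (a stock) is drained by a completion rate (a flow), schedule pressure drives overtime, and overtime builds a fatigue stock that in turn lowers productivity. That closed loop is the "information feedback" and "circular causality" the definition refers to. All parameter names and values are assumptions chosen only to exercise the loops.

```python
def simulate_backlog(
    initial_work: float = 100.0,     # tasks in the backlog (a stock)
    workers: float = 5.0,
    base_productivity: float = 1.0,  # tasks per worker-week when rested
    deadline: float = 20.0,          # weeks
    dt: float = 0.25,                # Euler time step, in weeks
    max_weeks: float = 60.0,         # safety stop for the simulation
) -> list[tuple[float, float, float]]:
    """Return (week, remaining_work, fatigue) samples from a toy SD model."""
    remaining, fatigue, t = initial_work, 0.0, 0.0
    history = [(t, remaining, fatigue)]
    while remaining > 0 and t < max_weeks:
        capacity = workers * base_productivity
        time_left = max(deadline - t, dt)
        # Schedule pressure: required completion rate relative to normal capacity.
        pressure = (remaining / time_left) / capacity
        overtime = min(max(pressure, 1.0), 1.5)  # between normal hours and +50%
        # Feedback: overtime raises output now, but fatigue erodes productivity.
        productivity = base_productivity * overtime * (1.0 - fatigue)
        completion_rate = workers * productivity  # flow out of the backlog stock
        # Fatigue stock builds with overtime and recovers over ~4 weeks.
        fatigue_rate = 0.1 * (overtime - 1.0) - fatigue / 4.0
        # Euler step: each stock changes by its net flow times dt.
        remaining = max(remaining - completion_rate * dt, 0.0)
        fatigue = min(max(fatigue + fatigue_rate * dt, 0.0), 0.9)
        t += dt
        history.append((t, remaining, fatigue))
    return history


if __name__ == "__main__":
    for deadline in (20.0, 15.0):
        run = simulate_backlog(deadline=deadline)
        week = run[-1][0]
        peak_fatigue = max(f for _, _, f in run)
        print(f"deadline {deadline}: done at week {week}, peak fatigue {peak_fatigue:.3f}")
```

With `deadline=20.0` the required rate equals normal capacity, so the backlog empties in exactly 20 weeks with no fatigue; tightening the deadline to 15 weeks triggers overtime, and the fatigue loop starts pushing back against it.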

What do the big guys do with SD?

Chris and Robert mentioned the long-time application of SD in the Department of Defense (DOD). A quick Google search revealed widespread use ranging from scholarly articles about simulating the control behavior of fighter planes to pragmatic approaches to re-architecting the acquisition process of the DOD itself. It looks like the DOD appreciates System Dynamics. Here is a clip from that search. Large enterprises can make good use of SD too. In a 2008 Harvard Business Review (HBR) article entitled Mastering the Management System by Robert S. Kaplan and David P. Norton, the authors identify the concept of closed loop management systems in linking strategy with operations, informed by feedback from operational results.


Various studies done in the past 25 years indicate that 60% to 80% of companies fall short of the success predicted from their new strategies. By creating a closed-loop management system, companies can avoid such shortfalls.

Their article, which you can see here with the right HBR subscription, discusses how this might be done, without explicitly mentioning System Dynamics.

What about the rest of us?

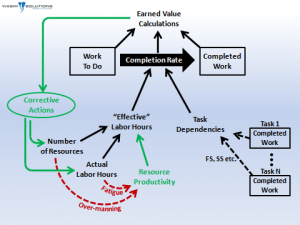

Most of the rest of us are involved in more mundane tasks than articulating corporate strategy. Many of us work on planning IT projects, with plans defined in Microsoft Project (MSP). How many MSP users have solid training in the tool? I'll wager that many practitioners have learned MSP by the "seat of their pants", never quite understanding why a small change to the options of a project plan has produced dramatically different timelines, or why subtle changes cause plans to oscillate between wildly optimistic and depressingly pessimistic.

The ViaSim team spoke directly to these points by introducing us to pmBLOX, an SD-empowered, MSP-like project planning tool that addresses the MSP issues that cause us to lose confidence and lose heart. In this graphic, developed by ViaSim, the black arrows indicate inputs normally available to MSP users. The key differences are shown in the green and red arrows. With pmBLOX, project planners may specify corrective actions like adding workers to projects that are challenged. In addition, project planners can account for project delivery inefficiencies resulting from fatigue or excessive staffing. You can watch Chris explain this himself, right here. At last, we will be able to respect Brooks's Law, the central thesis of which is "adding manpower to a late software project makes it later." Dr. Fred Brooks expanded on this at length in The Mythical Man-Month, published by Addison-Wesley in 1975. When I asked Chris about his experience with the challenges of mythical man-month project planning and non-SD project planning solutions, he told me:

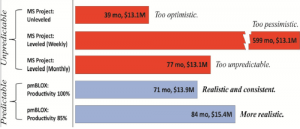

As we often see in the real world, sometimes "throwing people" at the problem puts the project further behind schedule. With these real-world corrective actions and productivity impacts, pmBLOX provides a framework for creating realistic and achievable plans. The biggest risk for any project is to start with an unrealistic baseline, which is often the case with many of today's simple (yet popular) project planning tools.
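The tension described here, between adding workers and the inefficiencies that come with them, can be illustrated with a deliberately simple weekly calculation. This sketch is not pmBLOX's model. It assumes, hypothetically, that each person loses a fixed fraction of every week per teammate to communication, that new hires produce nothing during a ramp-up period, and that each hire absorbs part of a veteran's week in mentoring. The function name, parameters, and defaults are all invented for illustration.

```python
def weeks_to_finish(
    remaining_tasks: float,
    veterans: int,
    new_hires: int = 0,
    ramp_up_weeks: int = 6,            # hypothetical: weeks before a hire is productive
    overhead_per_peer: float = 0.05,   # hypothetical: share of each week lost per teammate
    mentoring_per_hire: float = 0.25,  # hypothetical: veteran-weeks per week spent per hire
    productivity: float = 1.0,         # tasks per fully productive person-week
) -> float:
    """Weeks (possibly fractional) to finish `remaining_tasks` under a toy staffing model."""
    if remaining_tasks <= 0:
        return 0.0
    team = veterans + new_hires
    # Communication overhead grows with team size and lowers everyone's efficiency.
    efficiency = max(1.0 - overhead_per_peer * (team - 1), 0.0)
    if productivity <= 0 or team * efficiency <= 0:
        raise ValueError("this team can never finish under the model's assumptions")
    week = 0
    while True:
        if new_hires > 0 and week < ramp_up_weeks:
            # Hires are still ramping up: they produce nothing and consume veterans' time.
            capacity = veterans * efficiency - new_hires * mentoring_per_hire
        else:
            capacity = team * efficiency
        output = max(capacity, 0.0) * productivity
        if output > 0 and output >= remaining_tasks:
            # The work finishes partway through this week.
            return week + remaining_tasks / output
        remaining_tasks -= output
        week += 1


if __name__ == "__main__":
    print(round(weeks_to_finish(34, veterans=4), 2))               # 10.0
    print(round(weeks_to_finish(34, veterans=4, new_hires=4), 2))  # 10.69
```

Under these assumptions a four-person team with 34 tasks left finishes in 10 weeks, while adding four hires pushes completion out to about 10.7 weeks, which is Brooks's Law in miniature. With 200 tasks left, the same four hires shorten the schedule, consistent with the law's focus on projects that are already late.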

Here is an example of the output of MSP and the output of pmBLOX for an actual DOD project. You can see how a minor change in an MSP setting (leveling) made some dramatic and scary changes in the project plan. Notice that the output of pmBLOX looks much more realistic and stable with respect to project plan changes.


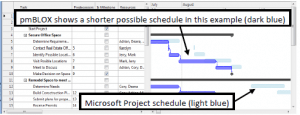

What about the learning curve for pmBLOX?

There is good news here. pmBLOX designers adopted the MSP paradigm and allow for direct importing of existing MSP-based plans. Here is an example of how pmBLOX looks, and how it depicts the difference between its plan and the one suggested by MSP. To my eye, the interface is familiar, so adopting and transitioning to pmBLOX should not be very difficult.


RFG POV:

System Dynamics (SD) is a powerful simulation approach for dynamic systems, and pmBLOX puts some of that SD power into the hands of project planners. While the ViaSim presenters talked about much more than pmBLOX, I thought the project management paradigm would make a good starting point for discussing this interesting technology. In September, the ViaSim team will present again at an InfoGov Community call. At that meeting they will, among other topics, address how SD simulations are tested to give SD developers and users confidence in the results of their simulations. If you are an InfoGov member, you will want to attend. If you are not yet an InfoGov member, consider joining.

The Little Mainframe That Could

RFG Perspective: The just-launched IBM Corp. zEnterprise BC12 servers are very competitive mainframes that should be attractive to organizations with revenues in excess of, or expanding to, $100 million. The entry level mainframes that replace last generation's z114 series can consolidate up to 40 virtual servers per core or up to 520 in a single footprint for as low as $1.00 per day per virtual server. RFG projects that the zBC12 ecosystem could be up to 50 percent less expensive than comparable all-x86 distributed environments. IT executives running Java or Linux applications or eager to eliminate duplicative shared-nothing databases should evaluate the zBC12 ecosystem to see if the platform can best meet business and technology requirements.

Contrary to public opinion (and that of competitive hardware vendors) the mainframe is not dead, nor is it dying. In the last 12 months the zEnterprise mainframe servers have extended growth performance for the tenth straight year, according to IBM. The latest MIPS (millions of instructions per second) installed base jumped 23 percent year-over-year and revenues jumped 10 percent. There have been 210 new accounts since the zEnterprise launch as well as 195 zBX units shipped. More than 25 percent of all MIPS are IFLs, specialty engines that run Linux only, and three-fourths of the top 100 zEnterprise customers have IFLs installed. The ISV base continues to grow, with more than 7,400 applications available, and more than 1,000 schools in 67 countries participate in the IBM Academic Initiative for System z. This is not a dying platform but one gaining ground in an overall stagnant server market. The new zBC12 will enable the mainframe platform to grow further and expand into lower-end markets.

zBC12 Basics

The zBC12 is faster than the z114: it uses a 4.2 GHz 64-bit processor and has twice the maximum memory of the z114, at 498 GB. The zBC12 can be leased starting at $1,965 a month, depending upon the enterprise's creditworthiness, or purchased starting at $75,000. RFG has done multiple TCO studies on the zEnterprise Enterprise Class server ecosystems and estimates the zBC12 ecosystem could be 50 percent less expensive than x86 distributed environments having equivalent computing power.

On the analytics side, the zBC12 offers the IBM DB2 Analytics Accelerator that IBM says offers significantly faster performance for workloads such as Cognos and SPSS analytics. The zBC12 also attaches to Netezza and PureData for Analytics appliances for integrated, real-time operational analytics.

Cloud, Linux and Other Plays

On the cloud front, IBM is a key contributor to OpenStack, an open and scalable operating system for private and public clouds. OpenStack was initially developed by Rackspace Hosting and NASA and currently has a community of more than 190 companies supporting it, including Dell Inc., Hewlett-Packard Co. (HP), IBM, and Red Hat Inc. IBM has also added its z/VM Hypervisor and z/VM Operating System APIs for use with OpenStack. By using this framework, public cloud service providers and organizations building out their own private clouds can benefit from zEnterprise advantages such as availability, reliability, scalability, security, and cost.

As stated above, Linux now accounts for more than 25 percent of all System z workloads; these workloads can run on zEnterprise systems with IFLs or on a Linux-only system. The standalone Enterprise Linux Server (ELS) uses the z/VM virtualization hypervisor and has more than 3,000 tested Linux applications available. IBM provides a number of specially priced zEnterprise Solution Editions, including the Cloud-Ready for Linux on System z, which turns the mainframe into an Infrastructure-as-a-Service (IaaS) platform. Additionally, the zBC12 comes with EAL5+ security certification, a high level of assurance for a commercial server.

The zBC12 is an ideal candidate for mid-market companies to act as the primary data server platform. RFG believes organizations will save up to 50 percent of their IT ecosystem costs if the mainframe handles all the data serving, since it provides a shared-everything data storage environment. Distributed computing platforms are designed for shared-nothing data storage, which means duplicate databases must be created for each application running in parallel. Thus, if there are a dozen applications using the customer database, then there are 12 copies of the customer file in use simultaneously. These must be kept in sync as closely as possible. The costs for all the additional storage and administration can make the distributed solution more costly than the zBC12 for companies with revenues in excess of $100 million. IT executives can architect the systems as ELS only or with a mainframe central processor, IFLs, and zBX for Microsoft Corp. Windows applications, depending on the configuration needs.
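The duplication argument above is simple arithmetic, sketched below. The 500 GB database size is a hypothetical figure, and the two sync counts bracket two assumed designs: a hub-and-spoke arrangement where every copy is refreshed from one master (N - 1 feeds) and a worst case where every copy must be reconciled with every other (N(N - 1)/2 pairs). None of these figures come from RFG's TCO studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFootprint:
    copies: int
    storage_gb: float
    sync_feeds_hub: int       # assumption: each copy refreshed from one master copy
    sync_feeds_pairwise: int  # assumption: every copy reconciled with every other copy


def footprint(apps: int, db_size_gb: float, shared_everything: bool) -> DataFootprint:
    """Illustrative count of database copies for `apps` applications sharing one logical database."""
    copies = 1 if shared_everything else apps
    return DataFootprint(
        copies=copies,
        storage_gb=copies * db_size_gb,
        sync_feeds_hub=copies - 1,
        sync_feeds_pairwise=copies * (copies - 1) // 2,
    )


if __name__ == "__main__":
    print(footprint(12, 500.0, shared_everything=True))
    # DataFootprint(copies=1, storage_gb=500.0, sync_feeds_hub=0, sync_feeds_pairwise=0)
    print(footprint(12, 500.0, shared_everything=False))
    # DataFootprint(copies=12, storage_gb=6000.0, sync_feeds_hub=11, sync_feeds_pairwise=66)
```

Storage grows linearly with the number of applications, while the synchronization burden grows at least linearly and, in the pairwise case, quadratically.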

Summary

The mainframe myths have misled business and IT executives into believing mainframes are expensive and outdated, and led to higher data center costs and sub-optimization for mid-market and larger companies. With the new zEnterprise BC12 IBM has an effective server platform that can counter the myths and provide IT executives with a solution that will help companies contain costs, become more competitive, and assist with a transformation to a consumption-based usage model.

RFG POV: Each server platform is architected to execute certain types of application workloads well. The BC12 is an excellent server solution for applications requiring high availability, reliability, resiliency, scalability, and security. The mainframe handles mixed workloads well, is best of breed at data serving, and can excel in cross-platform management and performance using its IFLs and zBX processors. IT executives should consider the BC12 when evaluating platform choices for analytics, data serving, packaged enterprise applications such as CRM and ERP systems, and Web serving environments.